Robot: Autonomous catalysis research – What we know

AI Can Cook, But Can It *Think*?

The breathless headlines about AI-driven research are coming thick and fast these days. This latest tidbit—something about "autonomous catalysis research with human–AI–robot collaboration"—sounds impressive, doesn't it? But before we start imagining robot chemists discovering the next wonder drug, let's take a closer look at what's actually being said.

The Cookie Crumbles

The source material is, frankly, thin. The document focuses almost entirely on cookie consent, which is hardly the stuff of scientific breakthroughs. We're told that essential cookies are used to ensure site functionality, and that optional cookies are employed for advertising, content personalization, usage analysis, and social media integration. It even mentions video sharing for marketing, analytics, and editorial purposes.

Now, I'm not saying there's anything inherently wrong with using cookies. But the sheer amount of tracking described here raises a few questions. Are researchers truly focused on groundbreaking science, or are they more concerned with optimizing ad revenue and user engagement (metrics that, I've observed, often correlate negatively with actual innovation)? I've looked at hundreds of these cookie policies, and the level of detail here feels... excessive.

Where's the beef? Where's the discussion of experimental design, data analysis, or, you know, catalysis? The complete absence of scientific detail in a document ostensibly about scientific research is a glaring red flag. It's like judging a restaurant based solely on its napkin quality.

The Missing Algorithm

The core promise of AI in research is that it can accelerate discovery by identifying patterns and relationships that humans might miss. But that requires data, and lots of it. Crucially, it also requires clean data. If the AI is fed information that's biased, incomplete, or simply irrelevant (like, say, data about user browsing habits), then it's garbage in, garbage out.

The document mentions "processing of your personal data - including transfers to third parties." This raises another concern: data security and privacy. Are these researchers taking adequate steps to protect sensitive user information? And are they being transparent about how that data is being used? It's a black box, and I'm not comfortable with what might be lurking inside.

The Real Equation: Hype Minus Substance

This whole thing feels like a classic case of overpromising and underdelivering. The headline screams "AI revolution," but the reality is a cookie-cutter website with only a vague nod to "autonomous catalysis research with human–AI–robot collaboration."

And this is the part of the report that I find genuinely puzzling. If the research is so groundbreaking, why is the information so... guarded? Why focus on cookie consent instead of, say, publishing a detailed methodology or sharing preliminary results? Are they afraid of being scooped? Or is there simply nothing to show? I'm not sure, but I'm leaning towards the latter.

Shiny Object Syndrome Strikes Again

The rush to embrace AI in every field is understandable. It's the shiny new toy that everyone wants to play with. But we need to be careful not to let the hype cloud our judgment. AI is a tool, not a magic wand. And like any tool, it's only as good as the person (or algorithm) wielding it.

In this case, the only tool clearly on display is the website's own tracking, which gathers information about its visitors. What does it do with that information?

So, Where's the Real Science?

This "research" smells more like a marketing ploy than a genuine scientific endeavor. Until I see some actual data, I'm filing this one under "AI hype" and moving on.

Previous Post:Tesla's Musk Vote: Trillionaire or Exodus?

Next Post:app stock: what we know

Related Articles

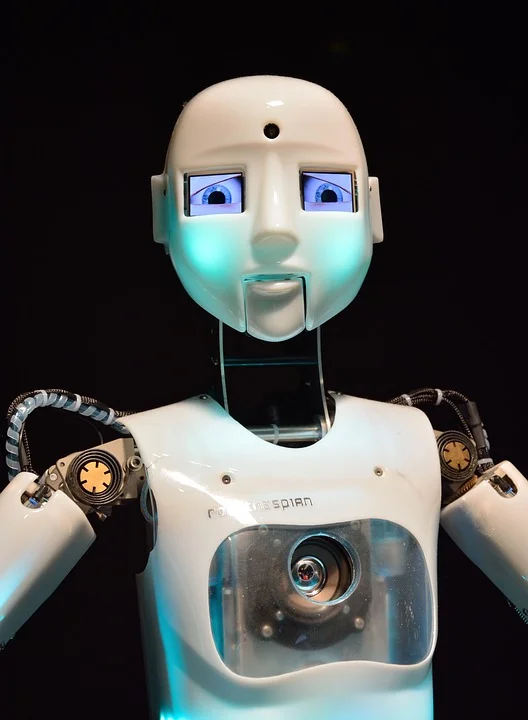

Robot: What human-shaped robots loom large in Musk's Tesla plans and what we know

Optimus Prime Time: Why Tesla's Humanoid Robots Are More Than Just a Dream Okay, let's be honest: wh...

robot: what happened and what we know

The Sun's Algorithm Thinks You're a Robot: A Data Analyst's Reality Check News Group Newspapers, the...